Nvidia has open sourced the KAI Scheduler, the Kubernetes-native GPU scheduling component of its Run:ai platform. The release is more than a code drop: it signals Nvidia’s commitment to innovation and collaboration within the AI community. Because KAI Scheduler is published under the Apache 2.0 license, enterprises and individual developers alike can use, modify, and redistribute an advanced AI scheduling tool that was previously available only as part of a commercial platform.

AI technology is evolving quickly, and efficient resource management has become essential. Traditional approaches to scheduling GPU and CPU resources struggle to keep up with machine learning workloads, whose resource needs change from one stage of a project to the next. KAI Scheduler was designed specifically to handle these fluctuating GPU demands and to streamline access to compute.

Solving the Bottleneck: Real-Time Resource Allocation

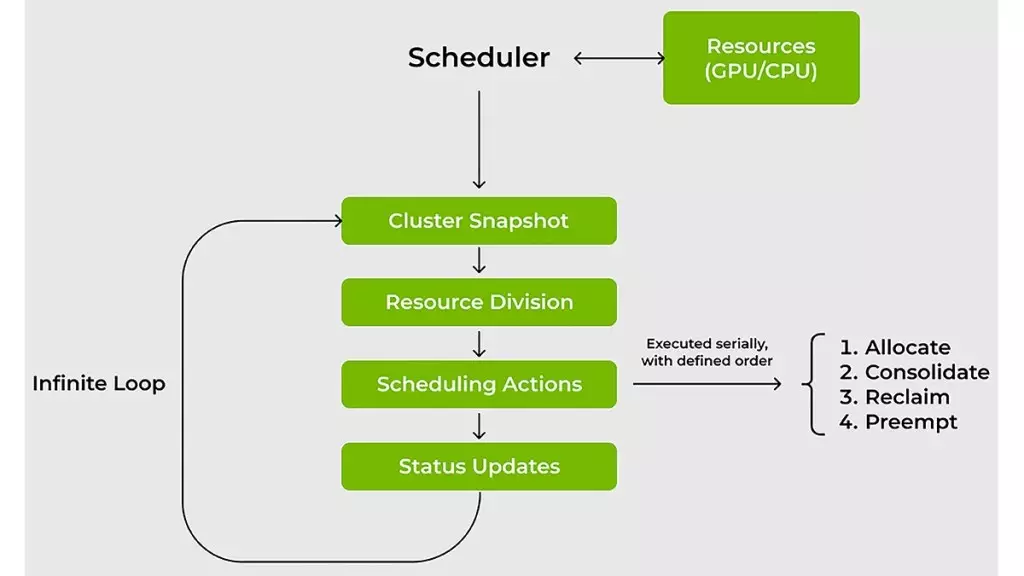

One of KAI Scheduler’s main features is its ability to adapt to changing workload requirements in real time. Many conventional schedulers depend on manual tuning; KAI Scheduler instead continuously recalculates fair-share values and adjusts quotas as demand shifts. When a team moves from a single GPU for data exploration to multiple GPUs for large-scale training, the scheduler reallocates resources automatically, which reduces wait times and keeps work moving.
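
The article does not spell out how KAI Scheduler computes fair share. As a rough illustration only, here is a minimal Python sketch of weighted max-min fairness ("water-filling"), a standard way to divide a pool of GPUs among queues. The function name `fair_share` and its inputs (per-queue demand and weight) are hypothetical and not part of KAI Scheduler’s API; rerunning the function whenever demand changes is one simple model of continuous recalculation.

```python
from typing import Dict


def fair_share(capacity: float, demand: Dict[str, float],
               weight: Dict[str, float]) -> Dict[str, float]:
    """Weighted max-min fair share ("water-filling"), illustrative only.

    Each queue receives capacity in proportion to its weight, but never
    more than it demands. Capacity left over by fully satisfied queues is
    redistributed among the queues that still want more.
    Assumes every queue with positive demand has a positive weight.
    """
    share = {q: 0.0 for q in demand}
    active = {q for q in demand if demand[q] > 0}
    remaining = capacity
    while active and remaining > 1e-9:
        total_weight = sum(weight[q] for q in active)
        # Tentative increment for each active queue, proportional to weight.
        increments = {q: remaining * weight[q] / total_weight for q in active}
        satisfied = set()
        for q in active:
            need = demand[q] - share[q]
            if increments[q] >= need:
                # The queue's demand fits: cap it at its demand.
                share[q] = demand[q]
                remaining -= need
                satisfied.add(q)
        if not satisfied:
            # Nobody hit their cap, so hand out the increments and finish.
            for q in active:
                share[q] += increments[q]
            remaining = 0.0
        # Satisfied queues drop out; leftover capacity is re-split next round.
        active -= satisfied
    return share
```

With three equally weighted queues sharing 8 GPUs, a queue that asks for 1 GPU gets exactly that, and the other two split the remaining 7 (3.5 each, in GPU-equivalents). If one of them later lowers its demand to 2, rerunning the function gives the freed capacity to the third queue.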

For machine learning engineers, this shortens development cycles. KAI Scheduler combines gang scheduling, which starts all pods of a distributed job together or not at all, with a hierarchical queuing system. Users can submit a batch of jobs and step away, confident that each job will start as soon as resources are available, in line with its priority and fair share. Data scientists can then spend their time on experimentation rather than on waiting for resources.
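
Gang scheduling is a standard technique, so its core idea can be shown generically. The sketch below is an illustration under simplifying assumptions, not KAI Scheduler’s implementation: a hypothetical `place_gang` function takes the free GPUs on each node and the GPU request of each pod in a job, and either places every pod or places none. Hierarchical queues and priorities are left out.

```python
from typing import Dict, List, Optional


def place_gang(free_gpus: Dict[str, int],
               pod_requests: List[int]) -> Optional[Dict[int, str]]:
    """All-or-nothing placement for a gang of pods (illustrative sketch).

    Tries to find a node for every pod in the gang. If any pod cannot be
    placed, nothing is committed and None is returned, so a distributed
    job never starts with only some of its workers running.
    """
    trial = dict(free_gpus)  # work on a copy; commit only on success
    placement: Dict[int, str] = {}
    # Place the largest pods first, since they are the hardest to fit.
    order = sorted(range(len(pod_requests)), key=lambda i: -pod_requests[i])
    for i in order:
        need = pod_requests[i]
        # First node (in name order) with enough free GPUs.
        node = next((n for n in sorted(trial) if trial[n] >= need), None)
        if node is None:
            return None  # the gang does not fit: leave the cluster untouched
        trial[node] -= need
        placement[i] = node
    free_gpus.update(trial)  # commit the whole gang at once
    return placement
```

On two nodes with 2 free GPUs each, a job of three 2-GPU workers is rejected outright instead of starting two workers that would sit idle waiting for the third. Largest-first with first fit is only one simple heuristic; a production scheduler weighs many more constraints.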

Combatting Resource Fragmentation and Ensuring Fairness

Resource fragmentation is one of the most pressing problems in large-scale compute environments. When small jobs are scattered across many nodes, each node ends up partly occupied, and a large job may find no single node with enough free GPUs even though the cluster as a whole has plenty of idle capacity. KAI Scheduler counters this with bin-packing, which places smaller tasks onto GPUs and CPUs that are already partly in use, and consolidation, which reallocates tasks across nodes to reduce node fragmentation.
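
To make fragmentation concrete, here is a minimal sketch comparing two placement policies on a toy cluster: best-fit bin-packing and its opposite, spreading. The function names and the `"binpack"`/`"spread"` strategy strings are hypothetical, chosen for illustration, and consolidation (moving already-running tasks between nodes) is not modeled.

```python
from typing import Dict, List, Optional


def pick_node(free: Dict[str, int], need: int, strategy: str) -> Optional[str]:
    """Choose a node for a request of `need` GPUs (illustrative sketch).

    "binpack": best fit, the node with the FEWEST free GPUs that still fits,
               which keeps other nodes empty for large jobs.
    "spread":  the node with the MOST free GPUs, which balances load but
               scatters free capacity across nodes.
    """
    candidates = [n for n in sorted(free) if free[n] >= need]
    if not candidates:
        return None
    if strategy == "binpack":
        return min(candidates, key=lambda n: free[n])
    if strategy == "spread":
        return max(candidates, key=lambda n: free[n])
    raise ValueError(f"unknown strategy: {strategy}")


def schedule(free: Dict[str, int], requests: List[int], strategy: str) -> Dict[str, int]:
    """Place each request in order; return the free GPUs left on each node."""
    free = dict(free)  # do not mutate the caller's view of the cluster
    for need in requests:
        node = pick_node(free, need, strategy)
        if node is not None:
            free[node] -= need
    return free
```

Placing four 1-GPU jobs on two empty 8-GPU nodes, bin-packing leaves one node completely free, so an 8-GPU training job can still start. Spreading leaves 6 free GPUs on each node: 12 idle GPUs in total, yet no room for that job.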

The scheduler also enforces fairness through resource guarantees: each AI team receives the GPUs allocated to it, so no single group can monopolize a shared cluster, a pattern that has historically left capacity wasted. Resources a team is not using are dynamically reallocated to other workloads, which keeps overall cluster utilization high.
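
One way to picture guarantees combined with lending of idle capacity is a two-phase allocation, sketched below under simplifying assumptions: whole GPUs, a single level of queues, and a hypothetical `allocate_with_guarantees` function that is not part of KAI Scheduler. Queues first receive up to their guaranteed quota; leftover GPUs are lent round-robin to queues that want more. Recomputing after demand changes models reclamation: borrowed GPUs go back to their owner once it needs them.

```python
from typing import Dict


def allocate_with_guarantees(capacity: int, quota: Dict[str, int],
                             demand: Dict[str, int]) -> Dict[str, int]:
    """Guaranteed quota first, then lend idle GPUs (illustrative sketch).

    Phase 1: each queue receives min(quota, demand), so a queue asking for
             its guarantee always gets it.
    Phase 2: GPUs left idle are lent one at a time, round-robin, to queues
             whose demand exceeds their current allocation.
    """
    if sum(quota.values()) > capacity:
        raise ValueError("guaranteed quotas exceed cluster capacity")
    alloc = {q: min(quota.get(q, 0), demand[q]) for q in demand}
    idle = capacity - sum(alloc.values())
    hungry = [q for q in sorted(demand) if demand[q] > alloc[q]]
    while idle > 0 and hungry:
        for q in list(hungry):
            if idle == 0:
                break
            alloc[q] += 1
            idle -= 1
            if alloc[q] == demand[q]:
                hungry.remove(q)  # this queue is fully served
    return alloc


def over_quota(alloc: Dict[str, int], quota: Dict[str, int]) -> Dict[str, int]:
    """GPUs each queue holds beyond its guarantee, i.e. what could be reclaimed."""
    return {q: max(0, alloc[q] - quota.get(q, 0)) for q in alloc}
```

With 8 GPUs and a guarantee of 4 each, team `b` can borrow 3 of team `a`’s idle GPUs while `a` needs only 1; as soon as `a` asks for its full quota, recomputing the allocation hands them back.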

Simplifying AI Workflows: The Built-in Podgrouper Feature

KAI Scheduler also addresses a common pain point for AI practitioners: the configuration needed to connect workloads to the various AI frameworks. Wiring this up by hand is tedious and drags out development timelines. KAI Scheduler’s built-in podgrouper automatically detects and connects with tools such as Kubeflow and Ray, grouping the pods each workload creates so they can be scheduled together. This reduces setup work and speeds up prototyping, so teams can test their ideas sooner.
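
The podgrouper’s internals are not described here. As an illustrative assumption only, the sketch below shows the general idea of grouping pods by the top-level workload that owns them, so that all pods of one job can be treated as a single scheduling unit. The `Pod` class, its pre-resolved owner chain, and `group_pods` are hypothetical; in a real cluster each pod lists only its direct owner in `metadata.ownerReferences`, and walking up to the top-level workload requires API lookups. The kinds `PyTorchJob` and `RayCluster` in the usage below are just example labels.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Pod:
    name: str
    # Hypothetical, pre-resolved owner chain from the pod's direct owner up
    # to the top-level workload, as (kind, name) pairs.
    owners: List[Tuple[str, str]] = field(default_factory=list)


def group_pods(pods: List[Pod]) -> Dict[Tuple[str, str], List[str]]:
    """Group pods by their top-level owner (illustrative sketch).

    Pods belonging to the same workload, such as all workers of one
    training job, land in one group that a gang scheduler can treat as
    an all-or-nothing unit. A pod with no owner forms its own group.
    """
    groups: Dict[Tuple[str, str], List[str]] = defaultdict(list)
    for pod in pods:
        root = pod.owners[-1] if pod.owners else ("Pod", pod.name)
        groups[root].append(pod.name)
    return dict(groups)


pods = [
    Pod("train-worker-0", [("PyTorchJob", "train")]),
    Pod("train-worker-1", [("PyTorchJob", "train")]),
    Pod("ray-head", [("RayCluster", "rc")]),
    Pod("debug"),
]
groups = group_pods(pods)
```

Both training workers end up in one group keyed by their `PyTorchJob`, while the Ray head and the standalone debug pod each form their own group.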

By streamlining workflows and resource management, these features point toward a more collaborative and efficient AI ecosystem. Nvidia’s decision to open source the scheduler also renews discussion about innovation and knowledge sharing in the tech community.

In short, Nvidia’s KAI Scheduler represents not just a technical advancement but a philosophy that prioritizes agility, collaboration, and accessibility in artificial intelligence and machine learning. As more organizations adopt the open-source project, community contributions are likely to extend what it can do, and shared improvements will benefit everyone who runs AI workloads on shared clusters.